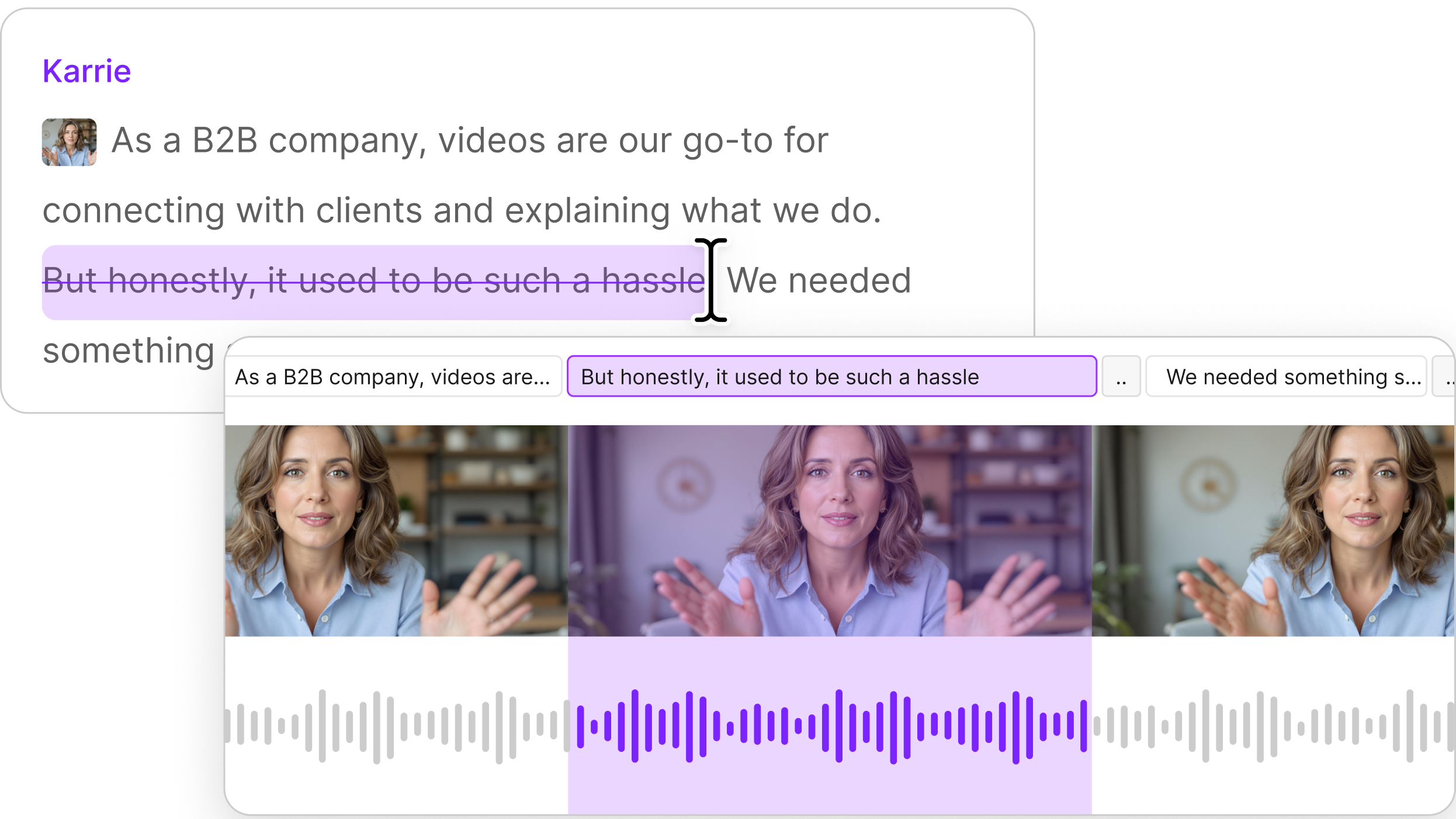

This post continues our series on Automatic Speech Recognition (ASR), the foundational technology that powers Descript’s super-fast transcription.

In recent years there’s been a surge in automatic speech-to-text services, most of them offered by tech giants like Google, Amazon, and Microsoft. But which is the best? We couldn’t find many good comparisons, and we needed to run one for ourselves anyway, so we decided to share the results.

We have good reason to do this: Descript is API-agnostic, which means we can work with whichever ASR provider gives us and our customers the best combination of speed, accuracy, and affordability. To date that mantle has been held by Google Speech’s ASR engine, which provides remarkable accuracy across a wide range of audio scenarios (this is important, for reasons we’ll get into shortly).

In other words, we keep tabs on which engine performs best so that we can give our customers the best possible transcripts.

To that end, here’s how our test was run, what you should know about it — and the results. The industry is moving quickly, so we’ll continue to test these services (and any new ones that join the fray) in the future.

Our Methodology

As we mention in our article dedicated to the challenges of testing speech recognition accuracy, speech models are typically measured using audio that’s not representative of how our customers use Descript. So for our test, we pulled samples of audio from YouTube videos that reflect the type of audio transcribed by our customers — primarily:

- Scripted broadcast (like news anchors reading from teleprompters, and audiobook recordings)

- Unscripted broadcast (like podcasts and TV interview/debate shows)

- Telephone and VoIP calls

- Meetings

We also sought to ensure each category of audio had samples with a variety of different speakers (including some with accents) and topics discussed.

It is worth noting that selecting samples involved decisions at several stages, which inevitably introduces bias. That said, Descript doesn’t have much to gain from running a test that yields inaccurate results: after all, we want to figure out which ASR engine is the best so that we can use it! Our main bias is probably toward audio that is reasonably intelligible to our ears, but we’ve included plenty of samples recorded in sub-optimal conditions.

We’ve embedded the individual audio files and results in this post so you can decide the degree to which it applies to your own audio.

Generating the transcripts

Once we compiled our corpus of test audio (about 50 clips of 3–5 minutes apiece), we had each one professionally transcribed and then conducted a follow-up QA pass to verify accuracy. These professional transcriptions serve as the “ground truth”: the reference transcripts that the automated services are tested against.

Next, we ran each audio sample through each ASR engine using its default parameters. Some ASR engines support customization for specific audio scenarios (if you always know you’re dealing with phone calls, you can optimize for that). But Descript users work with all kinds of audio, so we aim for the engine that is most accurate in general.

Finally, we scored each automatically generated transcript against its professionally transcribed counterpart using Word Error Rate (WER), the accuracy metric most widely used in the ASR field. WER counts the word substitutions, deletions, and insertions needed to turn the automated transcript into the reference, then divides that count by the number of words in the reference. Scoring included a normalization process (typical for this sort of testing) that allows for certain stylistic discrepancies between the ASR-generated and “ground truth” transcripts: e.g., telling the test that “will not” and “won’t” are functionally equivalent, and that “3” and “three” mean the same thing.
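To make the scoring step concrete, here is a minimal Python sketch of WER with a toy normalizer. The `EQUIVALENTS` table and the `normalize` and `word_error_rate` functions are hypothetical names for illustration, not the tooling we actually used; a real normalizer covers many more cases than the two equivalences shown. The error count is a standard word-level edit-distance (Levenshtein) alignment.

```python
import re

# Hypothetical, deliberately tiny equivalence table; a real one is much larger.
EQUIVALENTS = {
    "won't": "will not",
    "3": "three",
}


def normalize(text: str) -> list[str]:
    """Lowercase, drop punctuation, and expand known equivalents into words."""
    # Treat curly apostrophes like straight ones so "won’t" matches "won't".
    text = text.lower().replace("\u2019", "'")
    # Keep letters, digits, apostrophes, and whitespace; replace everything else.
    text = re.sub(r"[^a-z0-9'\s]", " ", text)
    words: list[str] = []
    for token in text.split():
        words.extend(EQUIVALENTS.get(token, token).split())
    return words


def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = normalize(reference)
    hyp = normalize(hypothesis)
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # reference word deleted
                curr[j - 1] + 1,     # extra word inserted
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[len(hyp)] / len(ref)
```

For example, `word_error_rate("I won't go.", "I will not go")` scores as a perfect match once the contraction is normalized. Because insertions count as errors, WER can exceed 100% when an engine produces many extra words.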

The Results

Average Word Error Rate by engine (lower is better):

- Google Speech (Video) — 16% 🏆

- Temi — 18%

- Amazon — 22%

- Speechmatics — 23%

- Trint — 23%

- Microsoft — 24%

- IBM Watson — 29%

- Google Speech (Standard) — 37%

With an average WER of 16%, Google Speech (Video) is the most accurate ASR engine in our testing. For many audio samples, Google’s engine scored a WER well under 10% — as low as 2% for some high-quality audiobook samples.
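The post doesn’t specify how the per-file scores were combined into one “average WER” per engine. Two common approaches can give different numbers when files vary in length and difficulty. Here is a minimal sketch, assuming you have each file’s error count and reference word count; the function names and the example numbers are hypothetical.

```python
from statistics import mean

# One entry per file: (word errors, words in the reference transcript).
FileScore = tuple[int, int]


def mean_per_file_wer(scores: list[FileScore]) -> float:
    """Average of per-file WERs: every file counts equally, whatever its length."""
    return mean(errors / words for errors, words in scores)


def pooled_wer(scores: list[FileScore]) -> float:
    """Total errors over total reference words: longer files carry more weight."""
    total_errors = sum(errors for errors, _ in scores)
    total_words = sum(words for _, words in scores)
    return total_errors / total_words


# Hypothetical example: a short, clean file and a longer, noisier one.
scores = [(10, 100), (90, 300)]
```

With these made-up numbers, the per-file mean is 20% while the pooled figure is 25%, because the longer, noisier file dominates the pooled total.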

Also, congratulations to Google on securing… Last place?

Let’s dig into this a little. Google offers several versions of its ASR engine, including one tailored for phone calls and another for brief commands; we tested the two best suited to broad use cases: the Standard model and the newer Video model. Remarkably, in our testing these were the worst-performing and best-performing engines, respectively.

Google says the Standard model is best for general use, such as single-speaker long-form audio, while Video is supposed to be better at transcribing multiple speakers (and videos). But in our testing we found that Video is much better at everything, even audiobook samples with a single speaker (in theory, the sort of content Standard should excel at).

This isn’t entirely surprising: the Video model is newer, and Google charges twice as much for it. But it goes to show just how quickly the field is moving.

Another intriguing result: second place in our test goes to Temi, the automated transcription engine made by Rev — a company best known for its human transcription services. This is a laudable achievement, given that Rev is a much smaller company than competitors like Google, Microsoft, Amazon, and IBM. And it’s a positive sign that ASR won’t necessarily be dominated by the tech giants, despite their expansive datasets and resources.

There’s only a 2-percentage-point difference between Google Speech (Video) and Temi, which may not be statistically significant, so we took a closer look at how the two compare file by file. Among our findings:

- In a head-to-head matchup, Google Speech (Video) wins on 31 of 46 samples, or 67%.

- Google’s margins of victory are also wider than its margins of defeat. On the files where Google wins, it makes an average of 22% fewer errors than Temi; on the files where Temi wins, Temi makes an average of 8% fewer errors than Google.
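A head-to-head comparison like this can be computed from per-file error counts. Below is a minimal sketch under stated assumptions: `head_to_head` and `sign_test_p_value` are hypothetical helpers, ties are excluded, and “X% fewer errors” is read as (loser’s errors − winner’s errors) / loser’s errors, averaged over the files an engine wins. The post does not spell out its exact formula. The exact sign test is a standard way to ask whether a win/loss split could plausibly arise if two engines were evenly matched; it is a suggestion here, not a test we report having run.

```python
from math import comb
from statistics import mean


def _average(values: list[float]) -> float:
    """Mean of a list, or 0.0 if an engine won no files."""
    return mean(values) if values else 0.0


def head_to_head(errors_a: dict[str, int], errors_b: dict[str, int]):
    """Compare two engines file by file using per-file word-error counts.

    Returns (wins_a, wins_b, avg_margin_a, avg_margin_b), where a margin is the
    winner's relative error reduction: (loser - winner) / loser. Ties are skipped.
    """
    margins_a: list[float] = []
    margins_b: list[float] = []
    for name in errors_a.keys() & errors_b.keys():
        a, b = errors_a[name], errors_b[name]
        if a < b:
            margins_a.append((b - a) / b)
        elif b < a:
            margins_b.append((a - b) / a)
    return len(margins_a), len(margins_b), _average(margins_a), _average(margins_b)


def sign_test_p_value(wins: int, n: int) -> float:
    """Two-sided exact sign test: the probability of a split at least this
    lopsided if each engine were equally likely to win any given file."""
    k = max(wins, n - wins)
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2**n
    return min(1.0, 2 * tail)
```

For example, `sign_test_p_value(31, 46)` gives the two-sided p-value for 31 wins out of 46 files. Note that a sign test ignores how large each margin is; it only counts wins and losses.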

Some additional trends we found, overall:

- Google’s Video model wins easily when tested on samples with relatively low WERs (which tend to be high-quality audio).

- The audio with the worst accuracy rates tends to involve considerable overtalk (people speaking at the same time). This is a very difficult challenge, but one we expect these engines to improve at over time.

With all of that said, congratulations to Google Speech on scoring top marks in our tests. We’ve been using the Google Speech (Video) model to power Descript since we launched last December (with mostly great feedback), and we plan on sticking with it.

The Samples

If you’re interested in seeing the actual data we used to conduct these tests, or in filtering down to the results for a particular ASR engine or the audio that’s most similar to yours, the embedded Airtable below has everything you need.

Caveats

We’ve mentioned most of these already, but for the sake of clarity:

- This is based on audio that’s representative of the types of audio our customers upload. Different types of audio may yield different results. So could modifying the configuration of the respective ASR engines.

- The test covers English-language audio only.

- WER is a flawed metric. It treats all errors equally, even though some errors hurt readability far more than others.

- We’re not considering speaker labels or punctuation, because WER ignores those.

Further Reading

Tim Bunce has written a series of posts about his own testing of ASR engines. His approach differs from ours in some important ways, and his insights are interesting.

Also see our other posts about automatic speech recognition, including:

- The History of ASR

- The Current State of ASR, with Kaldi’s Daniel Povey

- Challenges in Measuring Transcription Accuracy

Special thanks to Arlo Faria and the team at Remeeting. Arlo was kind enough to give us substantial guidance on running our tests.

Also many thanks to Scott Stephenson of Deepgram for sharing his insights.

Have thoughts or feedback regarding our tests? Please leave a note in the comments or send us an email.