Learning how to mix podcast audio is equal parts an art and a science. You can learn all you want about the physics of decibels and ohms, but what sounds crisp to your ear might be a little tight for someone else, and a conversation that projects gloriously on high-quality speakers might have nuances that get lost in the noise surrounding a pair of earbuds. Luckily, there’s lots to do before you get to that point. All raw audio can be improved substantially with some simple steps, which can give your podcast a much more professional sound.

That’s particularly important when there are so many shows out there competing for attention. You don’t want yours to come off as something that doesn’t matter to you and shouldn’t matter to anyone else. Plus, for every one of those semi-distracted listeners on AirPods, there will be someone with the newest set of Sony WH-1000XM5s picking up on background noises and aggressive plosives. Headphone and speaker technology improves constantly, which means your podcast audio quality needs to keep up.

So we turned to the Christians: Christian Dueñas, an editor and producer at Maximum Fun, and Christian Koons, a former producer at Song Exploder. We asked them about their production processes as podcast audio engineers. Here’s their guide to podcast mixing.

What to do while recording your podcast

First things first: Koons and Dueñas both emphasize that the most important thing is the original audio file itself. All audio needs some mixing to be its best, but the cleaner your original is, the easier your job will be. So try to make sure you and any other hosts and guests are aware of best practices when you record. Some quick tips on that:

Record in a quiet space

We know most people don’t have a dedicated podcast studio in their houses, and using a professional one can be more than you can afford, especially in the early stages. But the more stuff your recording has going on in the background — AC or refrigerator noise, motorcycles gunning it down a busy street, neighbors chatting, or even birds chirping — the more you’ll have to find a way to clean up when you’re mixing.

Record separately if possible

Having each voice on your podcast lay down a separate audio track will make your life substantially easier when it comes to mixing sounds. Separate tracks allow an editor to move around pieces of overlapping crosstalk, as well as to isolate issues in individual tracks so that they don’t bleed out to everyone’s vocals. And speaking of bleeding out…

Wear headphones

Other sounds that can muddy your audio quality are reverb and echo from someone else’s voice in the room, or from your own computer’s speakers. Headphones mean you can hear each other, but your microphone just hears you. That way what ends up on your tape is just the sound of your own beautiful voice.

Use the right microphone

Sounds obvious, right? If the thing that’s picking up your sound isn’t well-designed, the file you record will be flat, tinny, or lacking nuance, and as hard as it is to take sound out, it’s even harder to put it back in. Check out our picks for some of the best gear here.

Check the audio levels

Is your guest murmuring into their mic, yelling, or alternating between the two? Take a second to verify that your equipment is picking up enough — but not too much — before you get into the meat of the conversation. This is especially important because you can’t always trust your headphones. If they’re turned up loud, you may be able to hear something that your tape ultimately can’t.
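If you'd rather see a number than trust your ears, here's a minimal sketch of a level check on a short test recording. It assumes your samples are floats between -1.0 and 1.0, and the function names and both thresholds (`too_quiet`, `too_hot`) are illustrative assumptions, not settings from any particular recorder.

```python
import math

def peak_dbfs(samples):
    """Return the peak level of float samples (range -1.0..1.0) in dBFS."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return float("-inf")  # pure silence has no measurable level
    return 20 * math.log10(peak)

def check_level(samples, too_quiet=-30.0, too_hot=-3.0):
    """Classify a test recording. Both thresholds are assumed, illustrative values."""
    level = peak_dbfs(samples)
    if level >= too_hot:
        return "too hot"    # peaks are close to clipping
    if level <= too_quiet:
        return "too quiet"  # the mic is barely picking anything up
    return "ok"
```

Run it on a few seconds of your guest talking normally, then again on their loudest laugh, and adjust the mic gain until both come back as "ok".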

Talk with emotion

Okay, this one’s more qualitative, but it still matters a lot. Don’t let stage fright get the better of you! One of the benefits of listening to a podcast over reading an article is that human quality of hearing someone’s expressive, engaged voice as they read or talk. So give us the full range of how you’re feeling — your audience will really respond when you do.

How to mix your podcast in post-production

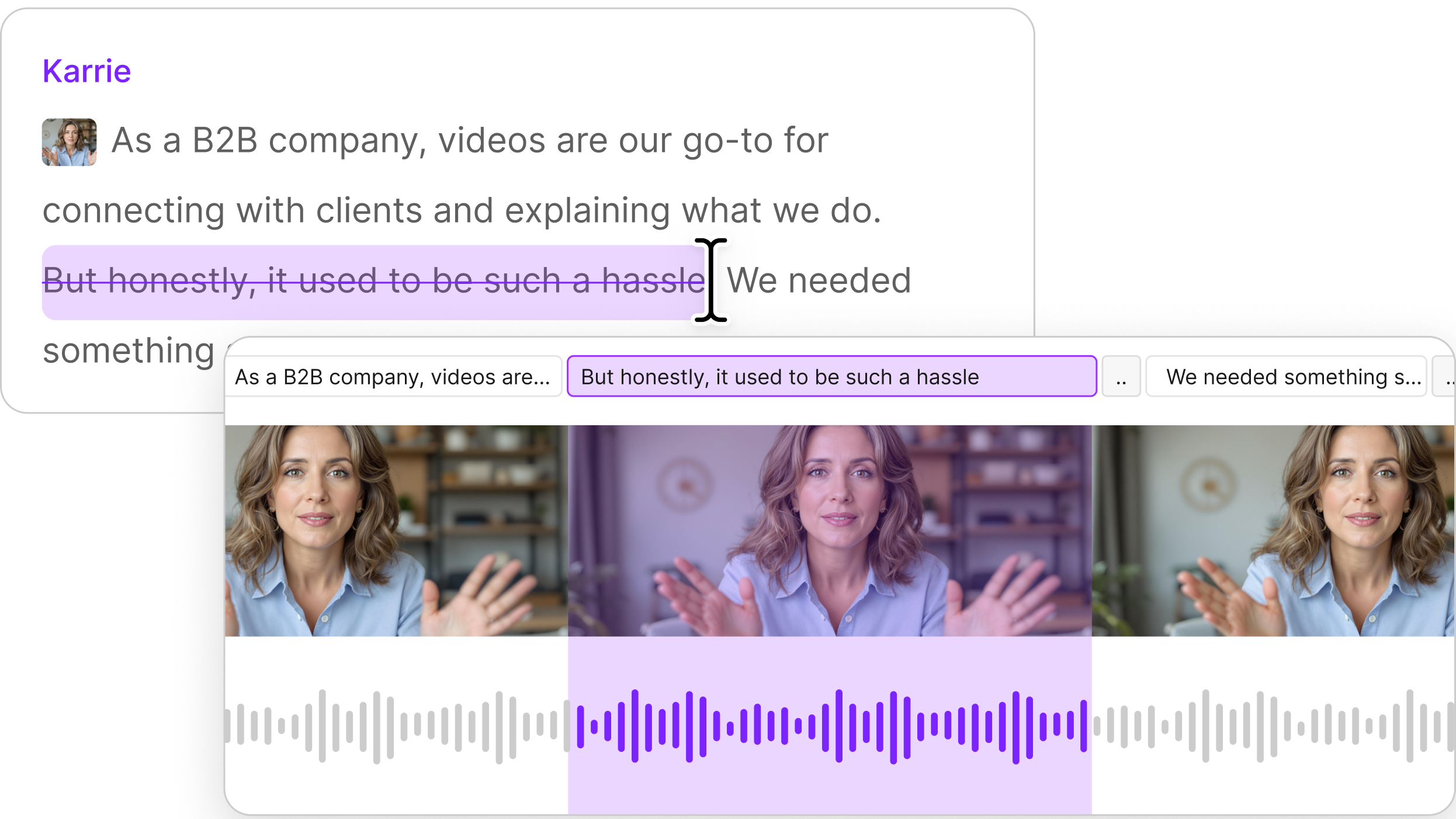

Once you have reasonably clean audio, if you’re in a hurry (or don’t want to learn the ins and outs of podcast production) you can always apply Descript’s Studio Sound to your files. Using AI, Studio Sound will enhance the sound of speakers’ voices while also removing unwanted reverb, echo, and other artifacts that will distract listeners from your brilliant content.

But if you want to get more into the weeds — and have more fine-grained control of your sound — you can separate out those processes and do them yourself.

Organize

Start by organizing and categorizing your tracks. Even if you only recorded one person talking, you’ll still likely be adding in some intro and outro music at a minimum. So put all of your vocal tracks where they belong, and add in any other tracks you’ll be needing. That way you can look at and listen to the waveforms to see what you’re dealing with across the spectrum. Are some much louder than others? Does one have background noise that needs to be taken care of with a noise reduction tool?

At this point, Koons recommends grouping some of your tracks in whatever program you’re using, particularly if they’re two halves of a conversation. Grouping means that any section you delete from one clip will also come off the other one — keeping them perfectly synced throughout.

Loudness meter

This is one of Koons’ favorite tools. It seems like you should be able to understand how loud something is just by listening to it, but that loudness is mediated by your headphones or speakers. A loudness meter instead measures loudness in LUFS (loudness units relative to full scale) as well as decibels. Many podcasters aim for around -16 LUFS as a target loudness, which helps ensure a consistent volume across different platforms.
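The arithmetic for hitting a loudness target is simple, because a 1 dB change in gain moves the reading by 1 loudness unit. Here's a rough sketch that assumes you've already read the measured value off a loudness meter; it doesn't measure LUFS itself, and `gain_to_target` is a hypothetical helper name.

```python
def gain_to_target(measured_lufs, target_lufs=-16.0):
    """Return (gain in dB, linear multiplier) needed to reach the target loudness.

    measured_lufs is assumed to come from a loudness meter.
    """
    gain_db = target_lufs - measured_lufs
    linear = 10 ** (gain_db / 20)  # convert dB to an amplitude multiplier
    return gain_db, linear
```

So a track that meters at -21 LUFS needs about 5 dB of gain to reach -16 LUFS. Keep an eye on your peaks afterward, since turning a track up can push its loudest moments into clipping.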

Compression

Now that you know how loud your different tracks are, the next steps are about making their volume and sound match one another, so that it’s not jarring when the host stops speaking and the guest chimes in.

Compression is often the first step. It’s an audio process that makes the loudest parts of your track quieter, and the quietest parts a little louder, pushing everything towards a nice middle space. That smaller range of sound means you lose a little bit of nuance, but it also means that listeners don’t have to turn the volume down when hosts get excited about something — only to crank it back up when the conversation turns serious again.
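To make the idea concrete, here's a deliberately simplified sketch of a compressor. It works one sample at a time with no attack or release smoothing, which real compressors have, and the parameter names and default values (`threshold_db`, `ratio`, `makeup_db`) are assumptions for illustration rather than any tool's actual settings.

```python
import math

def compress(samples, threshold_db=-20.0, ratio=4.0, makeup_db=0.0):
    """Reduce levels above the threshold, then apply optional makeup gain.

    Samples are assumed to be floats in the range -1.0..1.0.
    """
    out = []
    for s in samples:
        if s == 0:
            out.append(0.0)
            continue
        level_db = 20 * math.log10(abs(s))
        over = level_db - threshold_db
        gain_db = makeup_db
        if over > 0:
            # Above the threshold, `ratio` dB of input becomes 1 dB of output.
            gain_db -= over * (1 - 1 / ratio)
        out.append(s * 10 ** (gain_db / 20))
    return out
```

With a 4:1 ratio and a -20 dB threshold, a full-scale peak comes out at -15 dB while anything quieter than the threshold passes through untouched. Adding makeup gain afterward is what lifts the quiet parts.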

Descript includes a compressor tool, of course. You can adjust its settings manually, or you can try out some presets while you’re figuring out what works best for you and your audio, as well as your taste. You can even apply different versions of the compressor tool to different tracks within the podcast, allowing you to think through what each individual piece of audio needs to sound its best. Learn more about how all of that works here.

Equalizing

Equalizing is a bit more complicated. A good EQ tool is also a way to turn the volume up and down on sound — but instead of applying to everything, the way a compressor does, equalizing is about picking out specific frequencies and either making them louder or quieter. So if there’s a low hum in the background — an air conditioner or refrigerator, let’s say — you can use the equalizer just on that frequency and lower or eliminate it from the conversation.
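Here's one way the hum example could look in code: a notch filter, which cuts a narrow band around a single frequency and leaves the rest alone. This is a sketch using the widely published "Audio EQ Cookbook" biquad formulas, not the method any specific EQ plugin uses. The 60 Hz hum frequency in the usage note and the `q` default are assumptions, and the helper names are made up for this example.

```python
import math

def notch_filter(samples, sample_rate, center_hz, q=5.0):
    """Cut a narrow band around center_hz (higher q means a narrower cut)."""
    w0 = 2 * math.pi * center_hz / sample_rate
    alpha = math.sin(w0) / (2 * q)
    cos_w0 = math.cos(w0)
    b0, b1, b2 = 1.0, -2 * cos_w0, 1.0
    a0, a1, a2 = 1 + alpha, -2 * cos_w0, 1 - alpha
    x1 = x2 = y1 = y2 = 0.0  # previous inputs and outputs
    out = []
    for x in samples:
        y = (b0 * x + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2) / a0
        out.append(y)
        x2, x1 = x1, x
        y2, y1 = y1, y
    return out

def rms(samples):
    """Root-mean-square level, a rough stand-in for how loud a signal is."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def sine(freq_hz, sample_rate, seconds):
    """Generate a test tone so the filter can be tried without an audio file."""
    n = int(sample_rate * seconds)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]
```

Feeding `sine(60, 8000, 1.0)` through `notch_filter(..., 8000, 60)` leaves almost nothing once the filter settles, while a 1,000 Hz tone, which sits in the range where voices live, passes through nearly unchanged.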

Again, Descript allows you to EQ in-house. Just like with compressing, the tool offers you the option to see each individual band of frequency in a recording and adjust it manually, or use one of several presets if you don’t have the time (or patience!) for that kind of work.

Ducking

When you start adding in music, things can get more complicated, since the frequencies in a piece of music can make it hard to understand the dialogue. That’s where ducking comes in — it automatically lowers the volume of other tracks when you want one track (usually the speaking track) to be heard clearly.
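Under the hood, ducking boils down to "watch the voice track, and turn the music down whenever someone is talking." The sketch below shows that logic in its barest form. It assumes both tracks are lists of float samples at the same sample rate, and the block size, threshold, and -12 dB duck amount are all illustrative assumptions. Real tools also smooth the transitions so the music doesn't jump.

```python
import math

def duck_music(voice, music, block=4, threshold=0.05, duck_db=-12.0):
    """Lower the music in every block where the voice track is active."""
    duck_gain = 10 ** (duck_db / 20)
    out = []
    for start in range(0, len(music), block):
        v = voice[start:start + block]
        # RMS of the voice in this block; treat missing voice audio as silence.
        level = math.sqrt(sum(s * s for s in v) / len(v)) if v else 0.0
        gain = duck_gain if level > threshold else 1.0
        out.extend(m * gain for m in music[start:start + block])
    return out
```

In practice you'd use blocks of a few hundred samples or more; the tiny default here just keeps the example easy to follow.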

Descript does this slightly differently than other software. If you’re using a traditional digital audio workstation (DAW) like Audacity, Adobe Audition, or Pro Tools, you’ll set your music tracks to duck whenever there’s speaking in your dialogue track. In Descript, it’s the opposite: you’ll choose ducking in the effects of your speaking track in order to lower the volume of all other tracks. Here’s a step-by-step guide to ducking in Descript.

Fades

If you’re using music behind a conversation, don’t forget to make it fade in gradually. A lot of people assume that music should be loud, says Koons, but the key here is actually consistency.

“You don't want to have the talking be one consistent level for a couple minutes and then music come blaring in,” he says. “People are just gonna be reaching and turning down their stereo super quickly if that happens. You don't want that. You want someone just be able to set it and forget it, basically.”
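A gradual fade-in is just a volume ramp from zero up to full. Here's a minimal sketch using a straight-line ramp; `fade_in` and `fade_len` are names chosen for this example, and many editors offer curved fades that can sound smoother.

```python
def fade_in(samples, fade_len):
    """Ramp the first fade_len samples linearly from silence to full volume."""
    fade_len = min(fade_len, len(samples))
    out = list(samples)
    for i in range(fade_len):
        out[i] = samples[i] * (i / fade_len)
    return out
```

At a 44,100 Hz sample rate, a `fade_len` of 88,200 would give you a two-second fade.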

Do a little extra cleanup

Once Dueñas has the volume and dynamics of the sound cleaned up, he goes in and starts listening to individual words, and even specific letter sounds. “I used to work with somebody who had a gap in their teeth,” he says, “and on an s, the air would sharply hit the microphone. So I used this plugin that shows you a line graph, basically, so you can see, visually, this frequency is pretty gnarly here. Then you can notch that down. You can find those specific like s’s and f's and stuff like that.” You might also consider using a noise gate, a plugin that automatically mutes quieter, unwanted audio so only your speaker’s voice remains. This is the kind of finessing that will really set your work apart from amateur editors.
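For a sense of what a noise gate is doing, here's a stripped-down sketch: it looks at the audio in small blocks and mutes any block that falls below a threshold. The block size and the -40 dB threshold are assumptions for illustration, and real gates open and close gradually instead of snapping shut.

```python
import math

def noise_gate(samples, block=4, threshold_db=-40.0):
    """Mute every block whose RMS level falls below the threshold."""
    threshold = 10 ** (threshold_db / 20)
    out = []
    for start in range(0, len(samples), block):
        chunk = samples[start:start + block]
        level = math.sqrt(sum(s * s for s in chunk) / len(chunk))
        # Keep blocks that are loud enough; replace the rest with silence.
        out.extend(chunk if level >= threshold else [0.0] * len(chunk))
    return out
```

Set the threshold too high and you'll start clipping off the quiet ends of words, so it's worth nudging it down until only the room noise between sentences disappears.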

Make some presets

One last piece of advice: Dueñas notes that since he works with the same hosts regularly, he has a package of preset tools, and settings within the tools, that he tends to use for automation. That has to do with mic quality and room tone as well as the hosts’ personal preferences. “Traci, for example,” he says, referring to Traci Thomas of The Stacks, “she has a mic that picks up a lot of the low end, which makes her voice sound a little deeper than it actually is. I notch that down a little bit just to make her voice sound more natural. Some people record in a very big room with high ceilings, so I have a preset made to take away some of that reverb. It doesn't need to be super in depth; it’s just a useful baseline for when the deadline is tight.”

Frequently asked questions

How can I equalize my podcast audio for clearer voices?

Use an equalizer (EQ) to remove unwanted low-end rumble and gently boost the vocal frequency range. A basic high-pass filter set around 80–100 Hz helps cut out room noise. Slightly lowering “muddy” frequencies (somewhere between 200 and 400 Hz) can also improve clarity. Many creators also add a small boost between 2 and 4 kHz to brighten dialogue.

How do I balance multiple voices in a podcast mix?

Put each speaker on a separate track so you can adjust levels independently. First, aim for consistent loudness across all voices. Then use light compression or Descript’s Studio Sound to even out vocal peaks. Finally, listen back on different devices to confirm that everyone’s voice is clear and at a similar volume.

Do I need special hardware or a mixer for podcasting?

You can produce professional audio with just a microphone and software. A hardware mixer can be handy if you prefer hands-on control or if you have multiple sources to manage live. But many modern podcast editors (like Descript) provide robust mixing features without extra gear.

How can I make my recorded audio sound more “podcast-ready”?

Record in a quiet room, use headphones to prevent echo, and consider light post-production. Clean up filler words or background noise, then apply mild compression and EQ to smooth out the sound. Descript’s Studio Sound can also remove reverb and boost vocal clarity with one click.