Few recordings of human speech are made “perfectly,” that is to say, inside an acoustically treated studio, with a high-quality microphone, and without external noise or interference. Most are recorded imperfectly, with varying degrees of background noise and unwanted sound. Maybe there’s an air conditioner in the background or line-level fuzz. This is known as room tone, and when it comes to editing audio, dealing with room tone can be challenging and costly, requiring the use of dedicated plugins and software.

Today, Descript is proud to announce the release of a feature we’re simply calling Room Tone, which uses AI to automatically detect room tone in any given recording of speech — and then generates that tone when changing the timing of that recording. Need to add a gap clip between two sentences or paper over an edit without jarring dead air? Room Tone allows you to do that without a second thought (or even a second click).

Listen for yourself. This is a recording of Descript CEO Andrew Mason, edited with a gap clip of several seconds to adjust the timing.

The edit is dead silent — and painfully obvious. Now listen to this version, with Room Tone enabled.

That sounds much better!

And the more we started playing around with Room Tone, the more we realized it could do. Here’s a completely unedited clip of David Attenborough narrating as a pride of lions prepares to go on the hunt — followed by the exact same clip, after some editing and processing with Room Tone. Watch (and listen) for yourself!

We think Room Tone is pretty cool, and wanted to share with you some of the behind-the-scenes details that made it possible. Interested in working with a dedicated team to solve problems like this yourself? We’re hiring.

The inspiration

Our inspiration for Room Tone came from a particularly elegant research paper, as is often the case at Descript. Titled “Sound Texture Perception via Statistics of the Auditory Periphery: Evidence From Sound Synthesis” (Neuron 71, 926-940, 2011), it was authored by Josh H. McDermott and Eero P. Simoncelli. “It’s a beautiful paper, my favorite research paper of all time, actually,” says Prem Seetharaman, Research Scientist at Descript, who designed our Room Tone feature.

In their paper, McDermott and Simoncelli present a statistical model for interpreting “sound textures,” which they characterize as “the collective result of many similar acoustic events,” such as rainstorms, insect swarms, or galloping horses.

With these statistical models in hand, McDermott and Simoncelli generated synthetic sound textures by contouring white noise frequencies to match natural sound texture models. Their contoured results were practically indistinguishable to the human ear from the natural phenomena they were modeled after, indicating that “sound texture perception is mediated by relatively simple statistics of early auditory representations,” which are then interpreted by the brain as representing a particular phenomenon.

In other words, you can think of McDermott and Simoncelli creating synthetic sound textures like sculptors working with a block of marble — except their raw material is white noise. You chip away at the block (or white noise frequencies) until you get the actual recognizable structure you want.
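The sculpting idea can be sketched in a few lines of NumPy. This is a deliberately simplified illustration, not McDermott and Simoncelli's model, which matches a richer set of statistics than a single spectral contour. The function name `shape_white_noise`, the sample rate, and the example target contour are all assumptions made up for this sketch.

```python
import numpy as np

def shape_white_noise(target_magnitude, n_samples, seed=0):
    """Filter white noise so its spectrum follows a target contour.

    target_magnitude: gain per rFFT bin, length n_samples // 2 + 1.
    (Hypothetical helper for illustration only.)
    """
    rng = np.random.default_rng(seed)
    white = rng.standard_normal(n_samples)      # the "block of marble"
    spectrum = np.fft.rfft(white)               # move to the frequency domain
    # Keep the noise's random phase, but scale each frequency bin by the target.
    shaped = spectrum * np.asarray(target_magnitude, dtype=float)
    return np.fft.irfft(shaped, n=n_samples)    # back to a waveform

# Illustrative target: a contour that rolls off above roughly 200 Hz,
# loosely like a low hum. The numbers are arbitrary.
sample_rate = 16000
n_samples = sample_rate                         # one second of audio
freqs = np.fft.rfftfreq(n_samples, d=1.0 / sample_rate)
target = 1.0 / (1.0 + (freqs / 200.0) ** 2)
texture = shape_white_noise(target, n_samples)
```

Two runs with different seeds produce different waveforms that share the same spectral contour, which is the sense in which a texture can sound the same without being sample-for-sample identical.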

The idea

When conceiving the Room Tone feature, we hypothesized that room tone in audio recordings could be considered a “sound texture” analogous to those described in McDermott and Simoncelli’s research, like crackling fire or buzzing bees.

McDermott and Simoncelli’s results indicate that auditory statistics determine how we perceive sound textures. If we could create a statistical model of the room tone in a given recording, we reasoned, we could filter white noise according to that model to create a synthetic sound texture indistinguishable from the natural room tone, even if the waveforms were different.

With this hypothesis in hand, we conducted further research and prototyped several models before settling on one. Given a transcribed audio asset, we first automatically identify sections where only room tone audio is present. We then analyze those sections to create a room tone model. That model is then used to shape white noise according to the first-order statistics of the model, and the results sound perceptually identical to the room tone present in the original recording.
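The first two steps above, finding room-tone-only sections and analyzing them, might look something like the following. This is a rough sketch under stated assumptions, not Descript's implementation: it assumes the transcript provides per-word start and end times in seconds, treats any sufficiently long stretch without words as room tone, and uses a per-frequency average magnitude as one simple reading of “first-order statistics.” The names `find_gaps` and `estimate_room_tone_model` are hypothetical.

```python
import numpy as np

def find_gaps(words, total_duration, min_gap=0.5):
    """Return (start, end) spans, in seconds, that contain no transcribed words.

    words: list of (start, end) times per word, sorted by start time.
    """
    gaps = []
    cursor = 0.0                          # end of the speech seen so far
    for start, end in words:
        if start - cursor >= min_gap:
            gaps.append((cursor, start))
        cursor = max(cursor, end)
    if total_duration - cursor >= min_gap:
        gaps.append((cursor, total_duration))
    return gaps

def estimate_room_tone_model(audio, sample_rate, gaps, frame_size=1024):
    """Average magnitude spectrum over all windowed frames inside the gaps."""
    window = np.hanning(frame_size)
    hop = frame_size // 2                 # 50% overlap between frames
    frames = []
    for start, end in gaps:
        segment = audio[int(start * sample_rate):int(end * sample_rate)]
        for i in range(0, len(segment) - frame_size + 1, hop):
            frame = segment[i:i + frame_size] * window
            frames.append(np.abs(np.fft.rfft(frame)))
    if not frames:
        raise ValueError("no room-tone-only frames found")
    return np.mean(frames, axis=0)        # one value per frequency bin

# Example with made-up word timings and random audio standing in for a recording.
words = [(0.0, 0.4), (0.5, 1.0), (2.0, 2.5)]
gaps = find_gaps(words, total_duration=4.0)   # [(1.0, 2.0), (2.5, 4.0)]
sample_rate = 8000
audio = np.random.default_rng(0).standard_normal(4 * sample_rate) * 0.01
model = estimate_room_tone_model(audio, sample_rate, gaps)
```

The resulting `model` has one entry per frequency bin and could serve as the contour used to shape white noise. A production system would likely need a more careful detector than transcript gaps alone, since breaths and other non-speech sounds can fall between words.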

This all happens on the back end, calculated whenever a user uploads an audio recording into a Descript Project.

The implementation

After developing an effective model, we worked collaboratively to implement room tone generation into the Descript app and surface the functionality to users.

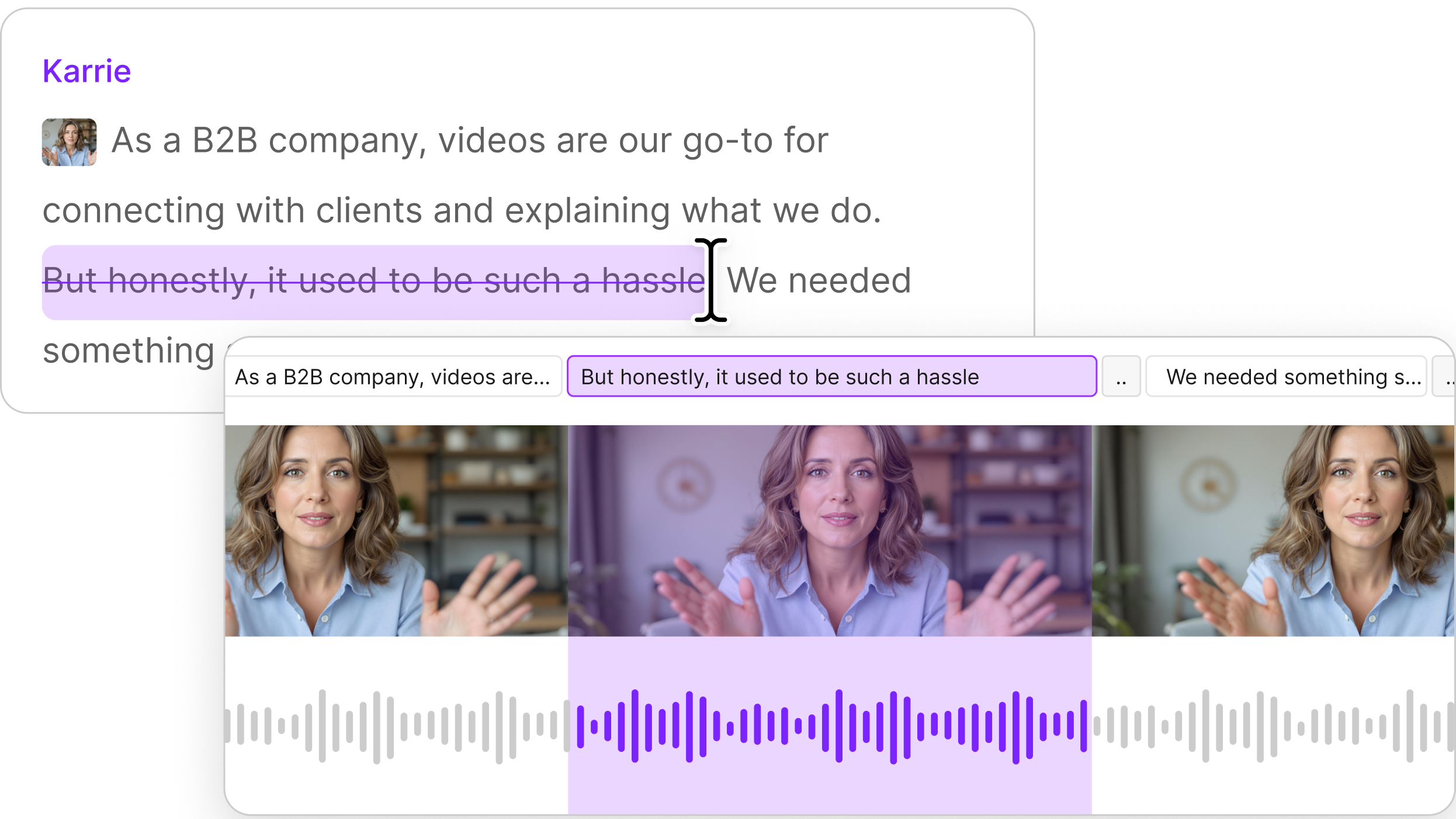

Engineers from the editor and backend teams joined researchers and designers to consider how best to allow users to access Room Tone. Iterating quickly, they landed on a simple and elegant solution: It should trigger automatically whenever a gap clip is inserted into a Descript Project.

Whenever we build products, our goal is to make our users’ lives easier and their media editing workflows simpler — and even fun. Accordingly, user feedback is intrinsic to our development process.

After an early release of the feature to our beta user group, users reported that the gain of the generated room tone was an issue. We conducted further experiments based on this feedback and adjusted the algorithm to fix it. The result is a seamless, friction-free experience, which means dealing with room tone is one less thing you need to think about when editing media in Descript.
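This post does not say how the gain was corrected. One common way to keep synthetic noise at a believable level, shown here purely as an assumption, is to scale it so its RMS level matches that of the measured room tone. The helpers `rms` and `match_gain` are hypothetical names for this sketch.

```python
import numpy as np

def rms(signal):
    """Root-mean-square level of a signal."""
    return float(np.sqrt(np.mean(np.square(signal))))

def match_gain(synthetic, reference, eps=1e-12):
    """Scale synthetic so its RMS level equals that of reference.

    eps guards against division by zero if synthetic is silent.
    """
    return synthetic * (rms(reference) / max(rms(synthetic), eps))

# Example: quiet "room tone" as the reference, louder synthetic noise to be scaled.
rng = np.random.default_rng(0)
reference = 0.01 * rng.standard_normal(8000)
synthetic = rng.standard_normal(8000)
matched = match_gain(synthetic, reference)
```

After scaling, `matched` has the same overall level as `reference`, so a generated gap would not jump in loudness relative to the surrounding recording.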

Beginning today, you can try Room Tone yourself. To confirm the feature is enabled, click the drop-down arrow next to the name of your Project in the Project Browser and choose Project Settings.

To add a gap clip, simply click and drag the wordbar in the timeline wherever you’d like to adjust timing. Room Tone will take care of the rest, so you can say goodbye to dead-silent edits.

Want to help us solve problems like this one?

If you’re interested in creating elegant, simple solutions to tricky problems like this one, we want to work with you! Descript just closed a Series B round and we’re growing our team, looking for smart, creative people to help us make powerful media editing tools accessible to everyone.

We hope you enjoyed this glimpse behind the scenes — go download Descript if you haven’t already and give the Room Tone feature a try for yourself!